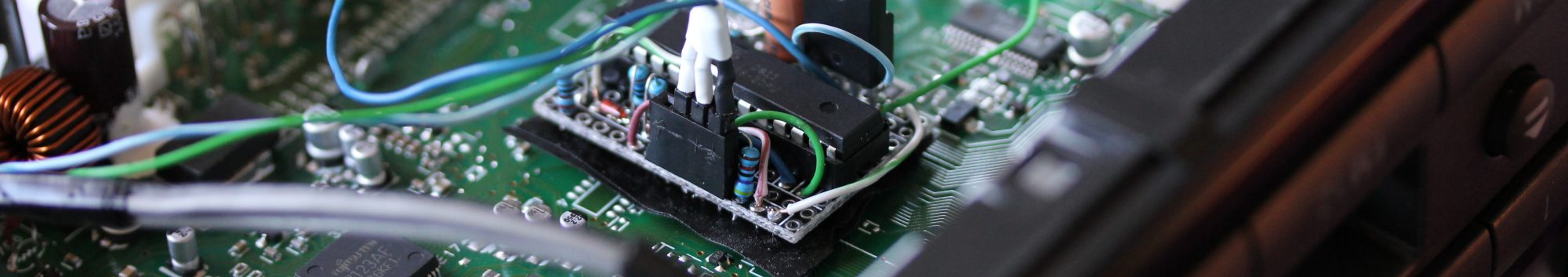

I bought an InkShield from the Kickstarter a few months ago, mostly out of a desire to support an interesting Open Hardware project. It wasn't until yesterday that I thought of something useful to do with it. Instead, I made this project, called the Semi-Automatic Paintbrush. Using an infrared camera, an InkShield, an ink cartridge with an infrared LED stuck to it, and your arm, you can copy great works of art, or just any old picture.

The desktop-side software is called paintbrush.py. It conveniently uses the homography module I wrote a year ago to map what the IR camera sees to the coordinate system of the canvas. The mapping is calibrated interactively: you place the cartridge/LED at each of the four corners of the canvas and press a key when prompted. After that, the script tracks the motion of the LED, finds the corresponding region of the image, and sends serial commands to an Arduino with the InkShield telling it which nozzles to fire, and at what duty cycle, to reproduce the correct level of gray (or in this case, green). It also keeps track of which regions have already been painted so the same spot isn't inked over and over and flooded.
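The calibration and tracking steps above can be sketched roughly as follows. This is not the code from paintbrush.py or from the homography module; it is a minimal sketch assuming NumPy, a grayscale image stored as a 2-D array, and a single duty-cycle value per sample (the real script works on image regions and individual nozzles). The function names `homography_from_corners`, `apply_homography`, and `paint_step` are hypothetical, the corner coordinates are made up, and the serial protocol to the Arduino is left out entirely. The homography is solved with a plain four-point direct linear transform.

```python
import numpy as np


def homography_from_corners(cam_pts, canvas_pts):
    """Solve the 3x3 homography H mapping camera pixels to canvas pixels
    from four point pairs (direct linear transform with H[2, 2] fixed to 1)."""
    A, b = [], []
    for (x, y), (u, v) in zip(cam_pts, canvas_pts):
        # u = (h11 x + h12 y + h13) / (h31 x + h32 y + 1), rearranged to be linear
        A.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        # v = (h21 x + h22 y + h23) / (h31 x + h32 y + 1), same rearrangement
        A.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = np.linalg.solve(np.array(A, dtype=float), np.array(b, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)


def apply_homography(H, pt):
    """Map one camera point (x, y) to canvas coordinates (u, v)."""
    u, v, w = H @ np.array([pt[0], pt[1], 1.0])
    return u / w, v / w


def paint_step(H, led_cam_xy, image, painted):
    """One tracking step, simplified to a single pixel per sample.

    Returns a duty cycle in [0, 1] for the pixel under the LED, or None if the
    LED is off the canvas or that pixel has already been painted.
    """
    u, v = apply_homography(H, led_cam_xy)
    col, row = int(round(u)), int(round(v))
    rows, cols = image.shape
    if not (0 <= row < rows and 0 <= col < cols) or painted[row, col]:
        return None  # skip: off-canvas, or already inked (avoids flooding)
    painted[row, col] = True
    return 1.0 - float(image[row, col]) / 255.0  # darker pixel -> more ink


if __name__ == "__main__":
    # Hypothetical calibration: camera pixels recorded at the four canvas corners
    cam = [(102, 80), (530, 95), (515, 410), (90, 395)]
    canvas = [(0, 0), (199, 0), (199, 149), (0, 149)]
    H = homography_from_corners(cam, canvas)
    image = np.full((150, 200), 128, dtype=np.uint8)  # mid-gray test image
    painted = np.zeros(image.shape, dtype=bool)
    print(paint_step(H, (300, 240), image, painted))  # roughly mid-canvas, about 0.5
    print(paint_step(H, (300, 240), image, painted))  # None: already painted
```

Four correspondences are exactly enough to pin down the eight unknowns of a homography, which is why the interactive calibration only needs the four canvas corners.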

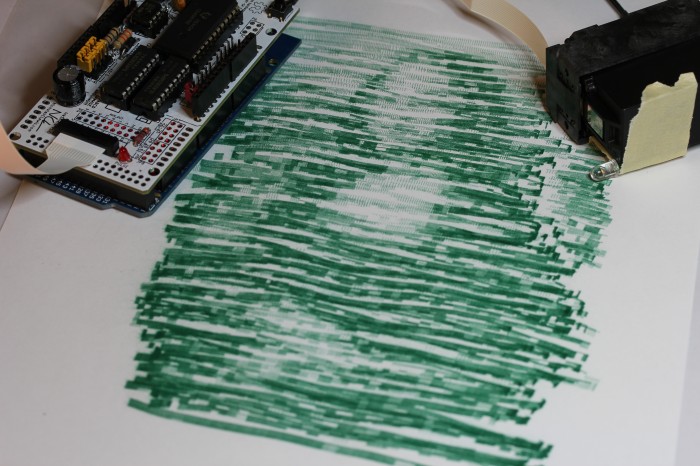

As you can see from the image above, the results are not going to end up in the Louvre, but they do have a kind of partially mechanical, partially organic flavor to them. If you have an InkShield, an IR LED, and a pygame-supported IR camera (I use a modified PS3 Eye), and you're interested in making your own lazy artwork, the script is available on github under an ISC License. The Arduino sketch requires the InkShield library and is licensed under the LGPL. Usage instructions are included with the script.

Why not use an optical mouse instead of a camera?

An optical mouse tracks relative movement, so error will accumulate over time. Also, if rotation of the mouse isn’t constrained somehow, there will be additional error. You can mitigate the second issue by using two optical mice, but I felt it was easier just to do absolute position tracking with a camera.
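To make the accumulation argument concrete, here is a small sketch (hypothetical function names, no real mouse involved) that dead-reckons a straight move from relative deltas, as a mouse mounted at a slight angle, or with a slightly wrong sensor gain, would report them. In both cases the position error grows in proportion to the distance travelled, whereas an absolute camera measurement has no history for error to build up in.

```python
import math


def dead_reckon(deltas, mount_angle_deg=0.0, scale=1.0):
    """Sum relative (dx, dy) moves the way a mouse would report them if it were
    rotated by mount_angle_deg and had a gain error of `scale` (both hypothetical)."""
    a = math.radians(mount_angle_deg)
    c, s = math.cos(a), math.sin(a)
    x = y = 0.0
    for dx, dy in deltas:
        # each reported delta is rotated by the mount angle and scaled by the gain
        x += scale * (c * dx - s * dy)
        y += scale * (s * dx + c * dy)
    return x, y


def drift(n_steps, **kw):
    """Distance between the dead-reckoned and true position after n unit steps along +x."""
    x, y = dead_reckon([(1.0, 0.0)] * n_steps, **kw)
    return math.hypot(x - n_steps, y)


if __name__ == "__main__":
    for n in (100, 1000):
        print(n, round(drift(n, mount_angle_deg=2.0), 1), round(drift(n, scale=1.01), 1))
```

With a 2° misalignment the drift after 1000 counts is ten times the drift after 100, and the same holds for the 1% gain error: neither ever gets corrected, because each new position is built on the previous estimate.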